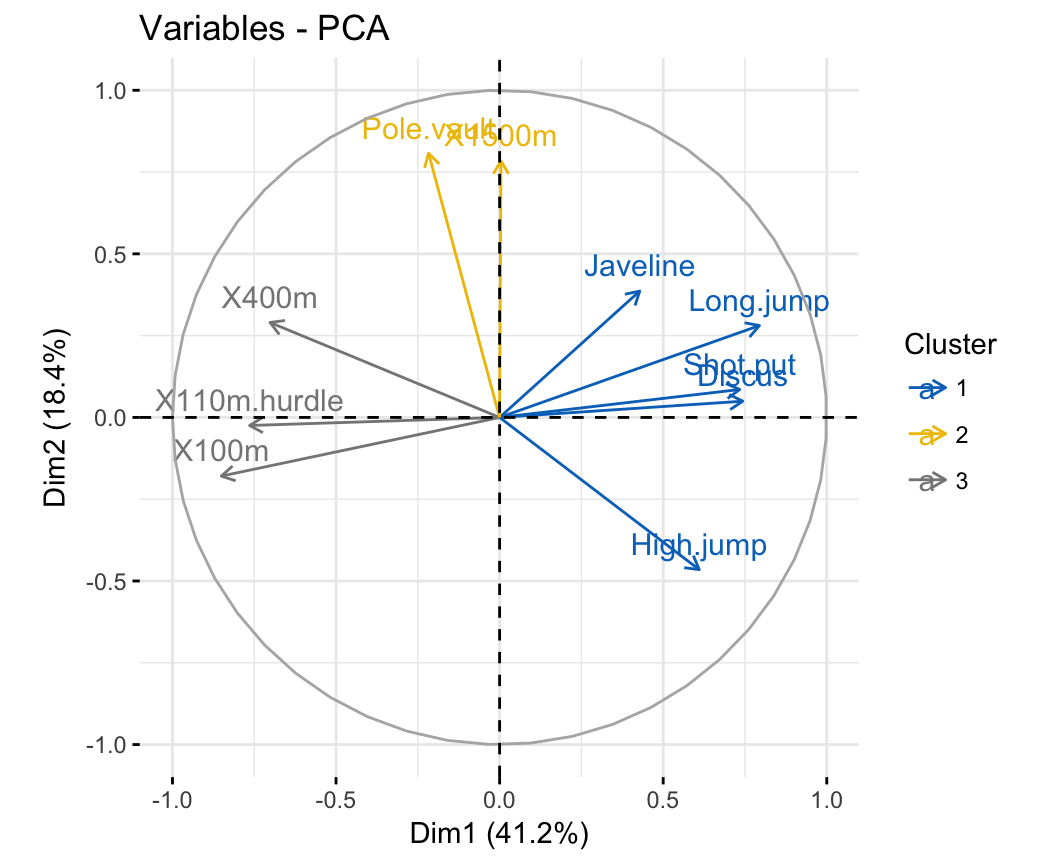

Make the plots: `model.biplot(n_feat=10, legend=False)`

Biplot in 2d. It can be nicely seen that the feature with the most variance (f1) is almost horizontal in the plot, whereas the feature with the second most variance (f2) is almost vertical. This is expected because most of the variance is in f1, followed by f2, and so on.

Biplot in 3d: `model.biplot3d(n_feat=10, legend=False)`. Here we see the addition of the expected f3 in the plot in the z-direction.

To detect outliers across the multi-dimensional space of PCA, Hotelling's T2 test is incorporated. This means that chi-square tests are computed across the top n_components (default is PC1 to PC5). Because of the nature of PCA, the highest variance (and thus the outliers) is expected in the first few components. Going deeper into PC space may therefore not be required, but the depth is optional.
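The outlier test described above can be illustrated with a minimal NumPy/SciPy sketch. This is not the package's own implementation: the function name `hotelling_t2_outliers`, the `alpha` threshold, and the synthetic data are assumptions made for illustration. Each sample's scores on the top components are divided by the component variance, summed into a T2 statistic, and compared against a chi-square distribution with `n_components` degrees of freedom.

```python
import numpy as np
from scipy.stats import chi2


def hotelling_t2_outliers(X, n_components=5, alpha=0.05):
    """Hypothetical helper: flag rows with an unusually large T2 on the top PCs."""
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                      # center each feature
    # Rows of Vt are the principal axes, ordered by explained variance.
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    k = min(n_components, Vt.shape[0])
    scores = Xc @ Vt[:k].T                       # sample coordinates in PC space
    var = scores.var(axis=0, ddof=1)             # variance of each component
    t2 = np.sum(scores**2 / var, axis=1)         # T2 statistic per sample
    p = chi2.sf(t2, df=k)                        # chi-square tail probability
    return t2, p, p < alpha


# Synthetic data (assumption): Gaussian noise with one planted outlier in row 0.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 6))
X[0] = 25.0
t2, p, is_outlier = hotelling_t2_outliers(X, n_components=5)
print("most extreme sample:", int(np.argmax(t2)))
```

Raising `n_components` looks deeper into PC space; in this sketch the planted outlier already dominates the first component, which matches the expectation that outliers show up in the first few PCs.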

Import the pca package:

    from pca import pca

Load example data:

    import numpy as np
    import pandas as pd
    from sklearn.datasets import load_iris

    # Load dataset
    X = pd. ... target)

    # Load pca
    from pca import pca

    # Initialize to reduce the data up to the number of components that explains 95% of the variance.
    model = pca(n_components=0.95)

    # Reduce the data towards 3 PCs
    model = pca(n_components=3)

    # Fit transform
    results = model.fit_transform(X)

X looks like this: `X=array(...)`

Normalizing out the 1st and more components from the data. This is useful if the data is separated in its first component(s) by unwanted or biased variance.

    ... shape)
    # (150, 4)

    # Normalize out 1st component and return data
    model = pca()
    Xnew = model. ...

    ... shape)
    # (150, 4)

    # In this case, PC1 is "removed" and the PC2 has become PC1 etc
    ax = pca.biplot(model)

Example to extract the feature importance:

    # Import libraries
    import numpy as np
    import pandas as pd
    from pca import pca

    # Let's create a dataset with features that have decreasing variance.
    # We want to extract feature f1 as most important, followed by f2 etc
    f1 = np. ...
    ... randint(0, 1, 250)

    # Combine into dataframe
    X = np. ...
    ... DataFrame(data=X, columns=...)

    # Initialize
    model = pca()

    # Fit transform
    out = model.fit_transform(X)

    # Print the top features. The results show that f1 is best, followed by f2 etc
    print(out)
    #     PC feature
    # 0  PC1      f1
    # 1  PC2      f2
    # 2  PC3      f3
    # 3  PC4      f4
    # 4  PC5      f5
    # 5  PC6      f6
    # 6  PC7      f7
    # 7  PC8      f8
    # 8  PC9      f9
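The idea behind the feature-importance example can be sketched with plain NumPy, without the pca package. This is an illustration under assumptions, not the package's internals: the four feature names, their value ranges, and the rule "top feature = largest absolute loading on each component" are chosen here for the sketch.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical features with decreasing spread, so decreasing variance.
upper_bounds = {"f1": 100, "f2": 50, "f3": 25, "f4": 10}
names = list(upper_bounds)
X = np.column_stack(
    [rng.integers(0, hi, 250) for hi in upper_bounds.values()]
).astype(float)

# PCA via SVD of the centered (not standardized) data.
Xc = X - X.mean(axis=0)
_, s, Vt = np.linalg.svd(Xc, full_matrices=False)
explained = s**2 / np.sum(s**2)          # explained variance ratio per PC

# For each PC, pick the feature with the largest absolute loading.
top = [names[i] for i in np.argmax(np.abs(Vt), axis=1)]
for pc, (feat, ratio) in enumerate(zip(top, explained), start=1):
    print(f"PC{pc}: {feat} ({ratio:.1%} of variance)")
```

Because the features are independent and not standardized, each component lines up almost entirely with one feature, which is why the ranking follows the variance order.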


pca is compatible with Python 3.6+ and runs on Linux, MacOS X and Windows. It is distributed under the MIT license.

Installation:

    pip install pca

Creation of a new environment is not required, but if you wish to do it:

    conda create -n env_pca python=3.6
    conda activate env_pca
    pip install numpy matplotlib scikit-learn

The latest version can also be installed from the GitHub source.